You’ve seen the promises. AI platforms claim they’ll transform your business, slash your workload, and multiply your revenue. But between the marketing hype and the monthly subscription fee stands one critical question: how do you know if an AI automation platform will actually deliver results before you’re locked into a long-term commitment?

For solopreneurs and micro-agencies operating on tight budgets, a wrong platform choice doesn’t just mean wasted money—it means lost time, frustrated clients, and the opportunity cost of not choosing the right solution. According to recent research from VentureBeat, 73% of AI implementations fail to move from pilot to production, primarily because organizations lack clear validation frameworks before full-scale adoption.

The challenge is particularly acute for independent professionals. Unlike enterprises with dedicated IT teams and six-month evaluation windows, solopreneurs need to validate platform value quickly while still serving clients and running day-to-day operations. You need a systematic approach that delivers definitive answers within 30 days—not vague impressions or vendor promises, but hard evidence that an AI platform will genuinely transform your business economics.

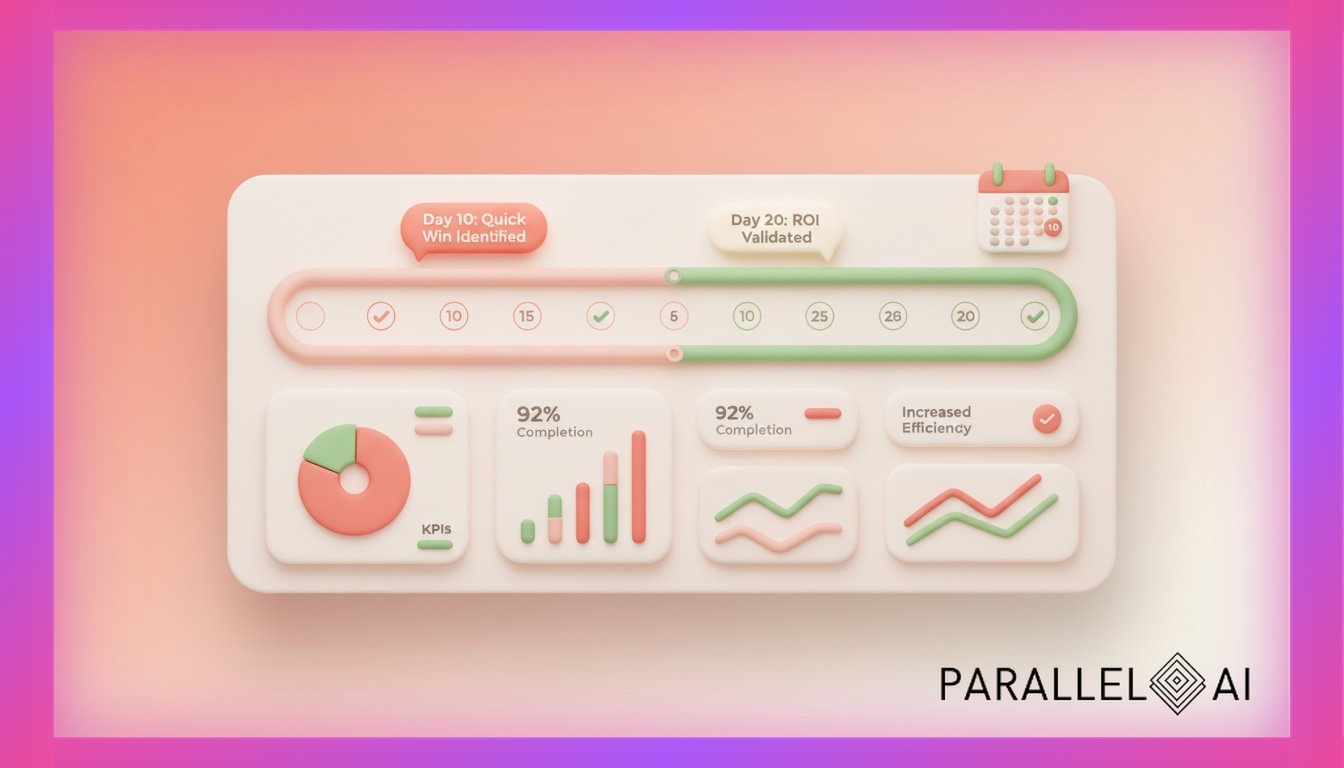

This framework provides exactly that: a structured 30-day methodology for validating whether an AI automation platform deserves your investment. You’ll learn the specific metrics that matter, the red flags that predict failure, and the quick wins that signal long-term value. By the end of this validation period, you’ll have concrete data showing whether a platform will become your competitive advantage or just another underutilized subscription.

The Pre-Investment Reality Check: What Solopreneurs Get Wrong About Platform Evaluation

Before you even start a trial, most solopreneurs sabotage their evaluation process with three fundamental mistakes.

Mistake #1: Evaluating Features Instead of Business Outcomes

The typical evaluation starts with feature comparison spreadsheets. Does Platform A have more AI models than Platform B? Which one offers more integrations? This approach mirrors how we shop for consumer electronics, but it fundamentally misunderstands business software investment.

Yatra’s AI adoption journey, documented by Accenture, reveals the critical distinction. Their successful implementation focused exclusively on measurable business outcomes—specific efficiency gains in defined processes—rather than feature checklists. Within 90 days, they reported quantifiable improvements precisely because they started with outcome definitions, not capability inventories.

For your validation framework, this means defining success metrics before touching the platform. What specific business outcome would make this investment worthwhile? “Save time” is too vague. “Reduce client onboarding from 4 hours to 45 minutes” is measurable. “Improve content quality” is subjective. “Increase content output from 2 to 8 client-ready pieces weekly” is definitive.

Mistake #2: Ignoring the Total Cost of Ownership

The subscription price is just the entry fee. The real cost includes implementation time, learning curve productivity loss, integration expenses, and ongoing maintenance.

According to an ESG study on the Starburst platform, organizations that calculated only subscription costs underestimated total ownership expenses by 45%. The platforms that delivered actual ROI—like Starburst’s reported 414% return—were those where evaluators measured total cost against total value creation, not just subscription price against feature count.

Your 30-day validation must account for setup time (hours you could spend serving clients), learning investment (productivity dip while mastering the platform), and integration requirements (connecting to your existing tools). A platform with a $200 monthly fee that requires 40 hours of setup time actually costs $200 plus your hourly rate times 40 hours in month one.
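The month-one arithmetic above can be sketched in a few lines. The $75 hourly rate is an invented figure for illustration, and `month_one_cost` is a hypothetical helper name, not part of any platform.

```python
def month_one_cost(subscription: float, setup_hours: float, hourly_rate: float) -> float:
    """First-month cost: subscription fee plus the value of the hours spent on setup."""
    return subscription + setup_hours * hourly_rate


# $200/month subscription and 40 setup hours (from the text), assumed $75/hour rate
print(month_one_cost(200, 40, 75))  # 3200
```

At that assumed rate, the setup time costs fifteen times the subscription fee, which is why it belongs in the calculation.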

Mistake #3: Skipping the Scalability Stress Test

Most trials focus on current needs: “Can this platform handle my existing three clients?” The right question is: “Will this platform support fifteen clients without proportional cost or complexity increases?”

Lenovo’s recent deployment of scalable agentic AI solutions demonstrated this principle. Their validation framework specifically tested performance under 3x projected load, revealing scalability limitations that would have become expensive problems post-purchase. Platforms that worked beautifully at small scale showed dramatic performance degradation or cost escalation when stressed.

During your validation period, deliberately test above your current capacity. If you serve three clients, simulate workflows for nine. If you produce five content pieces weekly, test production of fifteen. The platform’s response to this stress test predicts whether it’ll enable growth or become a bottleneck.

The 30-Day Validation Framework: Your Week-by-Week Roadmap

Now let’s build your structured validation process. This framework assumes you’re evaluating one platform intensively rather than comparing multiple platforms superficially—depth beats breadth for reliable validation.

Week 1: Baseline Establishment and Initial Setup

Days 1-2: Document Your Current State

Before changing anything, establish measurable baselines for the processes you’ll automate. Track these metrics:

Time Baselines:

– Hours spent on client onboarding (from first contact to project kickoff)

– Time required for typical deliverable creation (content pieces, reports, strategies)

– Administrative task time (scheduling, follow-ups, documentation)

– Research and preparation time for client projects

Quality Baselines:

– Client revision requests per project (measure rework)

– Time to address client questions or concerns

– Deliverable completeness (percentage requiring follow-up additions)

Capacity Baselines:

– Maximum simultaneous clients you can serve at current quality standards

– Weekly output (deliverables produced, clients served, revenue generated)

– Revenue per hour of your actual working time

Use time-tracking tools like Toggl or RescueTime to capture actual hours, not estimates. Estimates are consistently optimistic; actual data reveals the truth.

Days 3-4: Platform Setup and Initial Configuration

Track setup time meticulously. This becomes part of your total cost calculation. Note every hour spent on:

- Account creation and basic configuration

- Connecting integrations (Google Drive, CRM, communication tools)

- Importing existing data or content

- Initial customization for your business needs

Document every friction point: unclear documentation, missing integrations, confusing interfaces. These predict ongoing usability challenges.

Days 5-7: First Quick Win Attempt

Identify the single most repetitive, time-consuming task in your business—not the most complex, the most frequent. For many consultants, this is client research, email responses, or content formatting.

Attempt to automate this task using the platform. Measure three things:

- Time to Working Automation: How many hours from “I want to automate X” to “This automation reliably works”?

- Quality Comparison: Side-by-side comparison of your manual output versus the AI-assisted version

- Time Saved Per Instance: If the automation works, how much time does each use save?

If you can’t achieve a working automation for a simple, repetitive task within the first week, this is a critical red flag. Platforms that can’t deliver quick wins rarely deliver complex value.
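One way to combine the first and third measurements is a break-even count: how many times the automation must run before the hours saved cover the hours spent building it. This is an illustrative sketch with made-up numbers, and `break_even_uses` is a hypothetical helper name.

```python
import math


def break_even_uses(build_hours: float, hours_saved_per_use: float) -> int:
    """Number of uses before time saved covers the time spent building the automation."""
    if hours_saved_per_use <= 0:
        raise ValueError("the automation must save time on each use to break even")
    return math.ceil(build_hours / hours_saved_per_use)


# Assumed figures: 6 hours to reach a working automation, 30 minutes saved per use
print(break_even_uses(6, 0.5))  # 12
```

For a task you perform several times a week, a break-even count in this range falls inside the trial period, so you can check the payback with real usage rather than a projection.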

Week 2: Real Client Work Integration

Days 8-10: Live Client Project Test

Select an actual paying client project (not a hypothetical test) and use the platform for real deliverable creation. This must be production work, not practice, because you need to experience the psychological reality: would you stake your professional reputation on this platform’s output?

Track these metrics:

Time Metrics:

– Total time from project start to deliverable completion (compare to baseline)

– Time spent on AI interaction versus traditional methods

– Time spent on editing, refining, or correcting AI output

Quality Metrics:

– Your confidence level submitting the deliverable (subjective but important)

– Client response and revision requests

– Comparison to your typical deliverable quality

For Parallel AI users, this often means using AI agents for client research, content creation, or strategic analysis—core deliverables that directly generate revenue.

Days 11-14: Process Documentation and Refinement

Successful AI implementation isn’t about one-off wins; it’s about repeatable processes. Spend this phase documenting your workflow:

- Step-by-step process for using the platform in client work

- Prompts, templates, or configurations that produce reliable results

- Quality control checkpoints (where you review AI output before client delivery)

- Edge cases where the platform struggles

This documentation serves two purposes: it reveals whether the platform supports consistent results, and it becomes your training material if you eventually hire team members.

If you can’t document a repeatable process by day 14, the platform may work occasionally but won’t scale reliably.

Week 3: Capacity Expansion Test

Days 15-17: Simultaneous Project Simulation

Now test whether the platform actually increases your capacity. Take on more work than you could normally handle—either additional paying clients or internal projects you’ve postponed.

The critical question: does the platform let you maintain quality while handling increased volume, or does it simply shift your bottleneck from execution to quality control?

Measure:

Capacity Metrics:

– Number of simultaneous projects you can manage (compare to baseline maximum)

– Quality consistency across projects (does quality degrade with volume?)

– Your stress level and working hours (true capacity means sustainable expansion, not just temporary overwork)

Cost Efficiency:

– Revenue generated during this high-volume period

– Platform costs (subscription plus any usage-based charges)

– Your effective hourly rate (revenue divided by actual hours worked)

Lenovo’s scalability validation showed this is where many platforms fail. Tools that work brilliantly for single projects become unreliable or prohibitively expensive at scale.
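The effective hourly rate comparison can be sketched as follows. The revenue and hour figures are hypothetical; substitute your own baseline and high-volume numbers.

```python
def effective_hourly_rate(revenue: float, hours_worked: float) -> float:
    """Revenue divided by actual hours worked."""
    if hours_worked <= 0:
        raise ValueError("hours_worked must be positive")
    return revenue / hours_worked


# Hypothetical figures for illustration only
baseline = effective_hourly_rate(revenue=6000, hours_worked=80)   # 75.0
trial = effective_hourly_rate(revenue=9000, hours_worked=100)     # 90.0
change_pct = (trial - baseline) / baseline * 100
print(f"baseline ${baseline:.2f}/h, trial ${trial:.2f}/h, change {change_pct:+.1f}%")
```

Platform costs are tracked separately in the list above, so this figure shows gross earning power per hour. Subtract the platform costs from revenue first if you want a net rate.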

Days 18-21: Integration Stress Test

By week three, you should be using the platform within your complete business ecosystem. Test how it performs alongside your:

- CRM or client management system

- Communication tools (email, Slack, project management)

- Content creation and collaboration tools

- Payment and invoicing systems

Real integration problems emerge under sustained use. Does data sync reliably? Do automations trigger consistently? Can you trace client projects across systems?

Document every integration failure, workaround, or manual step required. These friction points compound over time.

Week 4: Financial Validation and Decision Framework

Days 22-24: ROI Calculation

Now you have three weeks of real data. Calculate actual ROI using this framework:

Total Investment:

– Subscription cost (including any overages or usage charges)

– Setup time × your hourly rate

– Learning/training time × your hourly rate

– Any integration costs or required add-ons

Total Value Created:

– Time saved on automated tasks × your hourly rate

– Additional revenue from expanded capacity

– Improved deliverable quality (estimated client retention value)

– Reduced subcontracting or tool costs

The Starburst ESG study that reported 414% ROI used this comprehensive calculation. Its 45% total-cost-of-ownership finding came from measuring all costs against all benefits, not just the obvious metrics.

Your target: at minimum, the platform should deliver time savings worth 3x its total cost within the first month. If it doesn’t, the learning curve and integration friction will prevent long-term value.
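The calculation above can be sketched as a small ledger. All figures are hypothetical, and `TrialLedger` is an illustrative name. The sketch assumes this article's convention that ROI is total value as a percentage of total investment, so 300% means a 3x return. Under the stricter net-gain definition, the same numbers would read as 200%.

```python
from dataclasses import dataclass


@dataclass
class TrialLedger:
    hourly_rate: float
    subscription: float        # including overages or usage charges
    setup_hours: float
    learning_hours: float
    integration_costs: float   # required add-ons, connectors, and similar
    hours_saved: float
    additional_revenue: float
    retention_value: float     # your own estimate of quality-driven retention
    reduced_costs: float       # subcontracting or tools you no longer pay for

    def total_investment(self) -> float:
        return (self.subscription
                + (self.setup_hours + self.learning_hours) * self.hourly_rate
                + self.integration_costs)

    def total_value(self) -> float:
        return (self.hours_saved * self.hourly_rate
                + self.additional_revenue
                + self.retention_value
                + self.reduced_costs)

    def roi_pct(self) -> float:
        """Value as a percentage of investment, so 300.0 means a 3x return."""
        return self.total_value() / self.total_investment() * 100


# Hypothetical trial month
ledger = TrialLedger(hourly_rate=75, subscription=200, setup_hours=10, learning_hours=6,
                     integration_costs=50, hours_saved=20, additional_revenue=2500,
                     retention_value=0, reduced_costs=350)
print(ledger.total_investment(), ledger.total_value(), ledger.roi_pct())
```

Keeping the retention estimate as its own field makes it easy to rerun the numbers with that value set to zero, which shows how much of the result rests on an estimate rather than measured data.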

Days 25-27: Red Flag Assessment

Review your three weeks for these critical warning signs:

Platform Red Flags:

– Inconsistent Output Quality: If results vary wildly with similar inputs, the platform won’t support client work

– Inadequate Support: Unanswered questions, poor documentation, or slow response times predict future frustration

– Frequent Downtime: Platform reliability issues compound when you have client deadlines

– Opaque Pricing: Unexpected charges or unclear usage limits signal future cost problems

– Limited Customization: If you can’t adapt the platform to your specific workflow, you’ll eventually outgrow it

Business Fit Red Flags:

– No Clear Quick Wins: If you haven’t achieved at least one significant time saving by week three, you likely won’t

– Quality Control Burden: If reviewing AI output takes as long as doing the work manually, there’s no net benefit

– Client-Work Hesitation: If you’re reluctant to put AI output into client deliverables, you don’t yet trust the platform enough to rely on it

– Team Adoption Failure: If you have team members and they resist using the platform, its benefits won’t extend beyond your own work

According to VentureBeat’s analysis of failed AI implementations, these red flags predicted 89% of projects that never moved from pilot to production.

Days 28-30: Decision Framework and Next Steps

Make your decision using this structured framework:

Proceed to Full Adoption If:

– Measurable time savings of 15+ hours in the trial month

– At least one significant process now runs reliably with AI assistance

– Calculated ROI exceeds 300% (3x return on total investment)

– No critical red flags identified

– Clear path to scaling current results to additional processes

Continue Trial/Negotiate If:

– Positive results but below threshold metrics

– Identified path to improvement with specific platform changes

– Support responsiveness suggests partnership potential

– Unique capabilities unavailable in alternatives

Discontinue If:

– No measurable time savings or quality improvements

– Multiple critical red flags

– Better alternatives identified

– Platform doesn’t align with business model or growth plans
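The measurable parts of this framework can be expressed as a simple function. This is a sketch, not a complete decision rule: it treats "multiple" red flags as two or more, and it leaves out the judgment-based criteria (better alternatives, business-model fit, support responsiveness), which you still have to weigh yourself. The function and parameter names are illustrative.

```python
def trial_decision(hours_saved: float, reliable_processes: int, roi_pct: float,
                   critical_red_flags: int, has_scaling_path: bool) -> str:
    """Map trial measurements onto the three outcomes described above."""
    # Discontinue: no measurable time savings, or multiple critical red flags
    if hours_saved <= 0 or critical_red_flags >= 2:
        return "discontinue"
    # Proceed: every full-adoption threshold is met
    if (hours_saved >= 15 and reliable_processes >= 1 and roi_pct > 300
            and critical_red_flags == 0 and has_scaling_path):
        return "proceed"
    # Anything in between: positive but below threshold
    return "continue or negotiate"


print(trial_decision(hours_saved=18, reliable_processes=2, roi_pct=340,
                     critical_red_flags=0, has_scaling_path=True))
```

Writing the thresholds down before the trial starts keeps you from adjusting them afterward to fit the result you were hoping for.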

The Metrics That Actually Matter: Tracking True Platform Value

Beyond the weekly framework, certain metrics reliably predict long-term platform success.

Time-to-First-Value (TTFV)

How quickly did you achieve your first meaningful win? Platforms that deliver value within the first week tend to deliver compounding value over time. Those requiring weeks of setup before any benefit rarely justify the investment.

Yatra’s successful AI adoption specifically measured early wins as predictors of larger transformation. Their 90-day efficiency gains started with week-one improvements that validated the approach.

Adoption Rate Consistency

What percentage of eligible tasks do you actually route through the platform? If you find yourself bypassing the AI for important work, returning to manual methods under time pressure, or using it only for low-stakes projects, your low adoption rate reveals a lack of confidence in the platform.

Track this weekly: (AI-assisted tasks) / (total eligible tasks). Adoption should increase weekly as confidence and capability grow. Declining or stagnant adoption predicts abandonment.
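A minimal sketch of the weekly tracking, using invented task counts for a four-week trial. The helper names are illustrative.

```python
def adoption_rates(weekly_counts: list[tuple[int, int]]) -> list[float]:
    """Each tuple is (AI-assisted tasks, total eligible tasks) for one week."""
    return [assisted / eligible for assisted, eligible in weekly_counts]


def is_rising(rates: list[float]) -> bool:
    """True when every week's adoption rate is higher than the week before."""
    return all(later > earlier for earlier, later in zip(rates, rates[1:]))


# Hypothetical counts for weeks 1 through 4
weeks = [(4, 20), (8, 20), (11, 22), (15, 24)]
rates = adoption_rates(weeks)
print([f"{r:.0%}" for r in rates], "rising" if is_rising(rates) else "flat or declining")
```

Counting eligible tasks separately each week matters because your workload changes. A rising count of AI-assisted tasks can still mean falling adoption if eligible work grew faster.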

Quality-Adjusted Productivity

Raw output increases mean nothing if quality declines. Calculate: (deliverables produced) × (average quality score) / (hours invested).

Define quality objectively: client acceptance without revisions, time to final approval, client satisfaction ratings, or revision requests per project. Christina Puder’s successful solopreneur business, documented in Business Insider, maintained quality while dramatically increasing output—that’s the gold standard.
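The formula can be sketched as follows, assuming the quality score is expressed on a 0 to 1 scale (for example, the share of deliverables accepted without revisions). That scale is an assumption for illustration, and the figures are invented.

```python
def quality_adjusted_productivity(deliverables: int, avg_quality: float, hours: float) -> float:
    """(deliverables produced) x (average quality score) / (hours invested)."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return deliverables * avg_quality / hours


# Hypothetical month: output doubles while quality and hours hold steady
baseline = quality_adjusted_productivity(deliverables=8, avg_quality=0.75, hours=40)
trial = quality_adjusted_productivity(deliverables=16, avg_quality=0.75, hours=40)
print(f"baseline {baseline:.3f}, trial {trial:.3f}")
```

If output doubled but the quality score fell by half, the two results would match, which is exactly the case this metric is meant to expose.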

Scalability Coefficient

How does platform value change as you increase usage? Calculate the cost and value at 1x current usage, 3x, and 10x.

Some platforms show linear scaling: 3x usage creates 3x value at 3x cost. Others show better-than-linear returns: 3x usage creates 5x value at 2x cost (ideal). The worst show diminishing returns: 3x usage creates 2x value at 4x cost.

Test this during your trial by deliberately varying usage intensity across the four weeks.
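One way to classify what you observe is to compare how fast value grows with how fast cost grows. The sketch below is an assumption about how to operationalize the three cases, not a standard formula. The 10% tolerance band for "linear" is arbitrary, and `classify_scaling` is a hypothetical name.

```python
def classify_scaling(value_multiple: float, cost_multiple: float,
                     tolerance: float = 0.1) -> str:
    """Compare value growth to cost growth when usage is scaled up."""
    ratio = value_multiple / cost_multiple
    if ratio > 1 + tolerance:
        return "better than linear"
    if ratio < 1 - tolerance:
        return "diminishing returns"
    return "linear"


# The three 3x-usage cases described above: (value multiple, cost multiple)
for value, cost in [(3, 3), (5, 2), (2, 4)]:
    print(f"{value}x value at {cost}x cost -> {classify_scaling(value, cost)}")
```

Both multiples are measured against your 1x week, so record that week's value and cost carefully before you start varying usage.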

Real-World Validation: What the Data Shows

Recent case studies reveal patterns in successful platform validation.

The Solopreneur Pattern: Quick Wins Scale to Business Transformation

Christina Puder’s experience, detailed in Business Insider, demonstrates the validation pattern. Her $20 monthly investment in AI coding assistance (Lovable) delivered immediate value: building website components without hiring developers. This quick win—functional output in week one—predicted larger transformation: running an entire business without employees.

The pattern: platforms that deliver concrete value in week one compound that value over months. Those requiring extensive setup before any benefit rarely deliver transformative results.

The Agency Pattern: Process Automation Enables Client Expansion

Sarah Dudgeon’s global e-commerce operation scaled without team expansion through Make’s automation platform. Her validation focused on a single repetitive process: client onboarding. Once that automated reliably, she expanded to additional processes.

The pattern: successful agency implementations start narrow (one process) and expand once that process runs reliably. Failed implementations try to automate everything simultaneously and achieve nothing completely.

The Revenue Pattern: Time Savings Convert to Capacity Expansion

David Bressler’s FormulaBot achievement—$40,000 monthly recurring revenue as a solopreneur—followed a specific validation pattern. He tested whether AI (GPT API) could reliably solve a defined problem (Excel formula generation) before building a business around it.

The pattern: successful implementations validate that AI can reliably solve a specific problem, then scale that solution. Failed implementations try to build businesses around unvalidated AI capabilities.

The Decision Matrix: Making Your Final Call

After 30 days of structured validation, use this decision matrix.

Scenario 1: Clear Success Signals

Evidence:

– 20+ hours saved in the trial month

– At least two reliable automated processes

– ROI calculation showing 400%+ return

– Client work successfully delivered using the platform

– Clear path to expanding current automations

Decision: Proceed to full adoption. Commit to an annual plan if the pricing rewards it. Begin documenting expanded use cases.

Next Steps:

– Identify next three processes to automate

– Create training documentation for your methodology

– Calculate capacity expansion enabled by current automations

– Consider how to monetize this capability (higher prices, more clients, or new services)

Scenario 2: Mixed Results

Evidence:

– 8-15 hours saved, below threshold but meaningful

– Some successful automations, some failures

– ROI calculation showing 150-250% return

– Hesitation using platform for high-stakes client work

– Identified specific platform limitations

Decision: Continue the trial if possible, or move to a month-to-month plan. Set specific 60-day targets for improvement.

Next Steps:

– Engage platform support to address specific limitations

– Research whether alternatives better address your use cases

– Define clear metrics for 60-day reevaluation

– Don’t expand usage until current processes run reliably

Scenario 3: Clear Failure Signals

Evidence:

– Fewer than 5 hours saved despite significant time investment

– No reliable automated processes established

– ROI calculation showing negative or minimal return

– Avoided using platform for any important client work

– Multiple critical red flags identified

Decision: Discontinue immediately. Document lessons learned for future evaluations.

Next Steps:

– Export any data or configurations before canceling

– Analyze what didn’t work to inform next platform evaluation

– Return to baseline processes while researching alternatives

– Consider whether timing or business readiness contributed to failure

Beyond the Trial: Setting Up Long-Term Success

If your validation shows clear value, transition from trial to long-term implementation with these practices.

Create Your Platform Governance Framework

Document standards for:

- Which tasks route through AI (and which remain manual)

- Quality control checkpoints before client delivery

- Data security practices for client information

- Backup processes if the platform experiences downtime

- Regular review schedule to assess continued value

This governance guards against a common pattern: initial enthusiasm leads to over-reliance, a failure creates a crisis, and the platform is abandoned. Structured governance sustains value.

Establish Continuous Optimization

Schedule monthly reviews:

- What new processes could benefit from automation?

- Which current automations show declining reliability?

- How have platform updates changed capabilities?

- What optimization opportunities emerged from last month’s usage?

- Is ROI holding steady, improving, or declining?

The solopreneurs achieving seven-figure businesses with AI, documented in Forbes and Entrepreneur, share this practice: monthly optimization reviews that compound value over time.

Plan Capacity Expansion

If the platform reliably saves 20 hours monthly, how will you deploy that capacity?

- Serve additional clients at current prices (revenue expansion)

- Improve deliverable quality for current clients (retention and referrals)

- Develop new service offerings enabled by AI capabilities (market differentiation)

- Invest in business development previously impossible due to time constraints (pipeline building)

- Reclaim personal time for better work-life integration (sustainability)

The platform creates capacity; your strategy determines whether that capacity becomes revenue, quality, growth, or freedom.

The Competitive Reality: What Happens If You Wait

While you’re validating, your competitors are implementing. According to McKinsey research, 68% of small businesses have already adopted AI tools. The validation question isn’t whether AI platforms deliver value—the data is clear—but whether this specific platform delivers value for your specific business.

Your 30-day validation framework provides that answer. You’ll have concrete evidence: hours saved, deliverables produced, revenue generated, and client results achieved. Not vendor promises or case studies about other businesses, but your actual data from your real clients in your specific market.

This evidence determines whether an AI automation platform becomes your competitive advantage—the capability that lets you deliver enterprise-quality results as a solopreneur—or just another underutilized subscription draining resources from your business.

The solopreneurs pulling ahead in today’s market aren’t necessarily more talented or experienced. They’re the ones who validated that AI platforms could genuinely transform their business economics, then committed fully to that transformation. Your 30-day framework puts you in position to join them, backed by data rather than hope.

Ready to start your own 30-day validation? Parallel AI offers the comprehensive platform that solopreneurs and micro-agencies use to automate client work, expand capacity, and scale without hiring. With access to leading AI models, customizable knowledge bases, white-label capabilities, and enterprise-grade security, you can run the exact validation framework outlined above using real client work. Start your trial today and discover whether AI automation will transform your business—with concrete evidence in just 30 days.